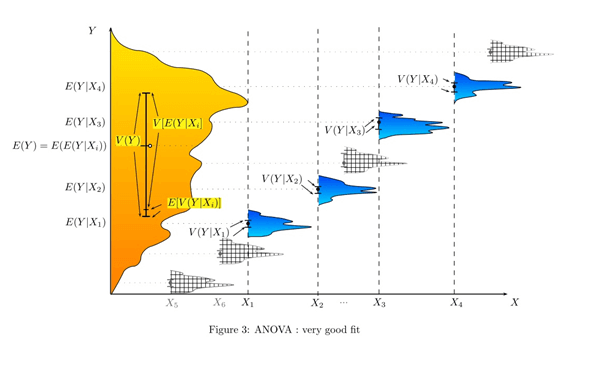

Commonality Analysis (CA)

Commonality Analysis (CA) refers to a set of statistical techniques for identifying systematic causes of yield loss. These techniques typically use association rules and/or ANOVA (Analysis of Variance) to identify manufacturing variables that are common to failed devices. Engineers use them to narrow down the possible causes. This information supports the decision to perform further experiments and ad-hoc analysis to identify and ultimately correct the cause. Note that, like all statistical techniques, commonality analysis is subject to errors known as false positives and false negatives.
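As a rough illustration of the association-rule approach, the sketch below scores rules of the form "attribute = value implies fail" by support, confidence, and lift. It is a minimal example under stated assumptions, not how any particular product implements the analysis: the function name `rule_metrics`, the record layout, and the prober IDs `PRB-A` and `PRB-B` are all hypothetical.

```python
from collections import defaultdict


def rule_metrics(records, attribute):
    """Score rules "attribute == value -> failed" for every value seen.

    records: list of dicts holding the attribute key and a boolean "failed" key.
    Returns {value: {"support": ..., "confidence": ..., "lift": ...}}.
    """
    if not records:
        return {}

    total = len(records)
    total_failed = sum(1 for r in records if r["failed"])
    base_fail_rate = total_failed / total

    # value -> [units seen, units failed]
    counts = defaultdict(lambda: [0, 0])
    for r in records:
        counts[r[attribute]][0] += 1
        if r["failed"]:
            counts[r[attribute]][1] += 1

    metrics = {}
    for value, (seen, failed) in counts.items():
        confidence = failed / seen  # P(failed | value)
        metrics[value] = {
            "support": failed / total,  # P(value and failed)
            "confidence": confidence,
            # Lift > 1 means this value fails more often than the population.
            "lift": confidence / base_fail_rate if base_fail_rate else float("nan"),
        }
    return metrics


# Hypothetical data: 10 devices per prober, 1 failure on PRB-A, 4 on PRB-B.
records = (
    [{"prober": "PRB-A", "failed": False}] * 9
    + [{"prober": "PRB-A", "failed": True}] * 1
    + [{"prober": "PRB-B", "failed": False}] * 6
    + [{"prober": "PRB-B", "failed": True}] * 4
)
metrics = rule_metrics(records, "prober")
for value, m in sorted(metrics.items(), key=lambda kv: -kv[1]["lift"]):
    print(value, m)
```

A lift well above 1 flags a value worth investigating, but with small sample sizes it can just as easily be a false positive, which is why follow-up experiments remain necessary.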

Process engineers in wafer fabs use commonality analysis extensively to identify equipment issues or time periods when equipment is systematically underperforming. At IC design firms, engineers can use commonality analysis to identify design collateral and equipment that cause systematic yield loss or an elevated retest rate. At OSATs, test equipment engineers conduct commonality analysis to identify ATEs, probers, and handlers that adversely impact test floor operations as well as the yield of customer products. Within Core yieldWerx, the Reporting & Analysis module gives product and test engineers the tools for their commonality analysis. With these tools, engineers identify problematic photomasks (reticles), test programs, probe cards, equipment, and time periods during wafer test or final test.
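To make the ANOVA side concrete, the following sketch computes a one-way ANOVA F statistic for lot yields grouped by tool. It is an illustrative example only: the function name `one_way_anova_f`, the tool IDs, and the yield numbers are hypothetical, and turning the F statistic into a p-value would additionally require the F distribution, which the Python standard library does not provide.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F statistic, df_between, df_within) for a one-way ANOVA.

    groups: dict mapping a group label (e.g. a tool ID) to a list of yields.
    Assumes at least two groups and some variation within the groups.
    """
    all_values = [v for values in groups.values() for v in values]
    grand_mean = mean(all_values)
    k = len(groups)      # number of groups
    n = len(all_values)  # total number of observations

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(vals) * (mean(vals) - grand_mean) ** 2 for vals in groups.values()
    )
    # Variation of individual observations around their own group mean.
    ss_within = sum(
        (v - mean(vals)) ** 2 for vals in groups.values() for v in vals
    )

    df_between = k - 1
    df_within = n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical lot yields (%) grouped by the etch tool each lot ran on.
yields_by_tool = {
    "ETCH-1": [92.0, 94.0, 93.0],
    "ETCH-2": [91.0, 93.0, 92.0],
    "ETCH-3": [80.0, 82.0, 81.0],
}
f_stat, df_between, df_within = one_way_anova_f(yields_by_tool)
print(f"F({df_between}, {df_within}) = {f_stat:.1f}")
```

A large F value indicates that the differences between tools are large relative to the lot-to-lot scatter within each tool. The same grouping could be applied to time periods instead of tool IDs.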

Limited data availability and a lack of clean data adversely impact the results of a commonality analysis. Accurate and complete data on products traversing the manufacturing and testing environment must be available. Genealogy data describes how lots or batches of material are split and combined, and history data records the tool associations for a specific lot, wafer, die, or device. This data is often stored in multiple systems, including Manufacturing Execution Systems (MES) and engineering test programs, and may also be recorded manually, e.g. in spreadsheets. Genealogy and history data are used to identify associations common to underperforming material (e.g. yield excursions, changes to performance bin numbers).
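The sketch below shows one way genealogy and history data might be combined: it walks each underperforming lot back through its splits and merges, collects every tool those ancestors touched, and intersects the results. The data layout, lot names, tool IDs, and function names are all hypothetical and chosen only for illustration; real MES exports will look different.

```python
def trace_ancestors(lot, parents):
    """Return the lot itself plus every ancestor lot in the genealogy map."""
    seen = set()
    stack = [lot]
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        stack.extend(parents.get(current, []))
    return seen


def tools_seen_by(lot, parents, history):
    """Collect every (step, tool) pair recorded for the lot or its ancestors."""
    tools = set()
    for ancestor in trace_ancestors(lot, parents):
        tools.update(history.get(ancestor, []))
    return tools


def common_tools(lots, parents, history):
    """Return the (step, tool) pairs shared by all of the given lots."""
    tool_sets = [tools_seen_by(lot, parents, history) for lot in lots]
    return set.intersection(*tool_sets) if tool_sets else set()


# Hypothetical genealogy: child lot -> parent lots (a split has one parent,
# a merge has several).
parents = {
    "LOT1.A": ["LOT1"],
    "LOT1.B": ["LOT1"],
    "LOT3": ["LOT1.B", "LOT2"],
}
# Hypothetical history: lot -> (process step, tool) pairs recorded for it.
history = {
    "LOT1": [("litho", "STEP-01")],
    "LOT2": [("litho", "STEP-02")],
    "LOT1.A": [("etch", "ETCH-1")],
    "LOT1.B": [("etch", "ETCH-2")],
    "LOT3": [("test", "ATE-7")],
}

underperforming = ["LOT1.A", "LOT3"]
suspects = common_tools(underperforming, parents, history)
print(suspects)
```

In this toy data set the two underperforming lots share only the lithography tool used on their common ancestor. A tool shared by the bad lots may of course also be shared by good lots, so a result like this is a starting point for comparison rather than a conclusion.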

Core yieldWerx provides the infrastructure modules that supply clean data, from which engineers can generate the analysis reports to begin their search for common attributes in underperforming material. Data stored in MES or other business systems may be loaded into the Core yieldWerx database for analysis. This data, combined with test data from wafer sort, final test, and fab parametric test, enables product engineers to effectively analyze, identify, and act on underperforming material across manufacturing and testing environments.

The Reporting & Analysis module includes the following reports which support an engineer in conducting a commonality analysis: PLACEHOLDER.